Delivers full-stack solutions that integrate with mainstream SoCs and sensors and support the ROS ecosystem. The hardware comprises core controllers; the software provides the robot's "brain" and "nervous system" layers, complemented by SaaS services that enhance robot intelligence.

In addition, mature AMR, AGV, and embodied AI solutions help OEMs develop products quickly and bring them to mass production efficiently.

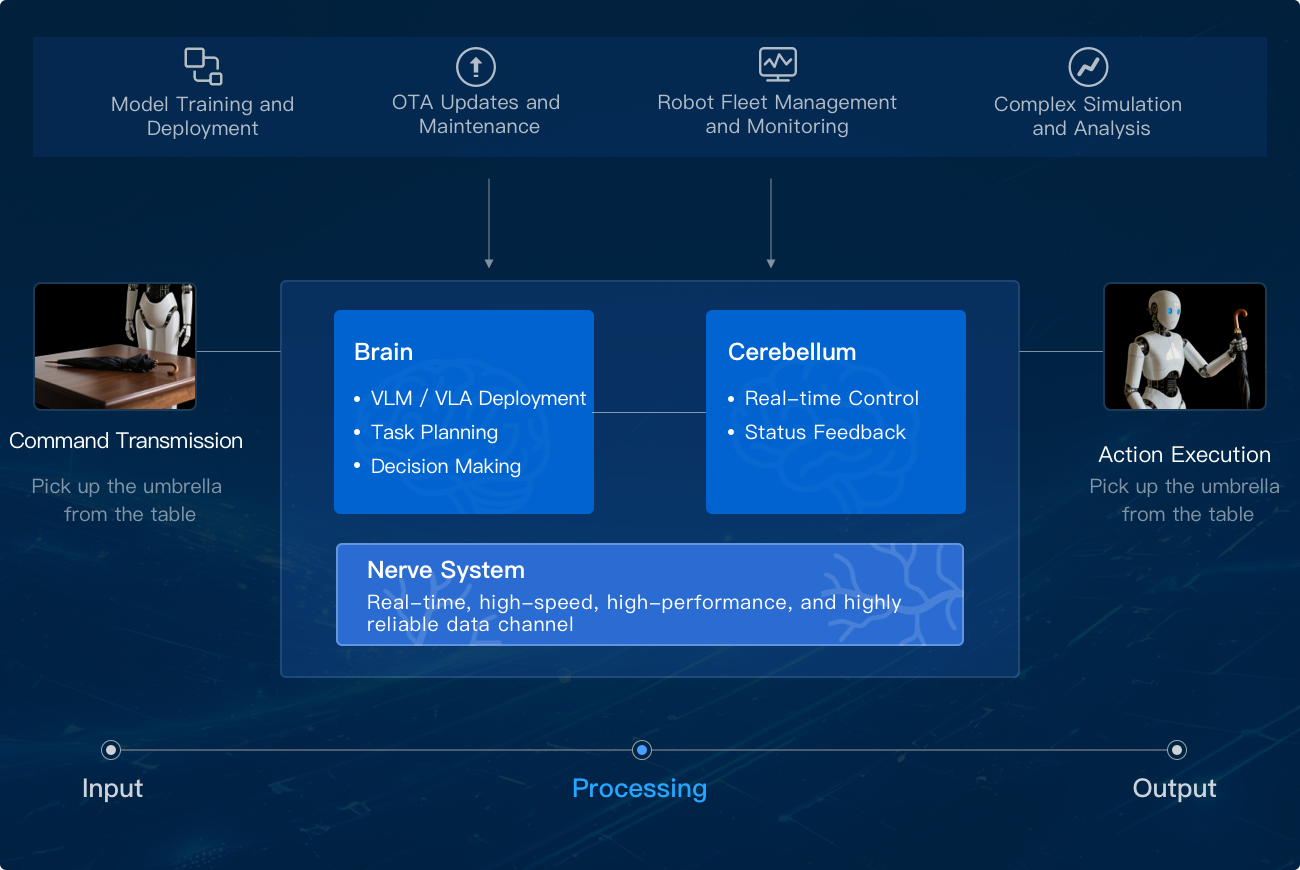

Built on AutoCore's integrated hardware-software robot core controllers and SaaS solutions, our brain + cerebellum architecture combines deeply optimized large models with intelligent agent technologies to deliver a full-stack embodied AI solution.

Builds an OS-level large model inference runtime environment with standardized interfaces and optimization libraries, so that pre-trained large models of different formats and architectures can be adapted and deployed to robot edge hardware.

Provides a unified multi-framework, multi-model inference platform that supports the R&D, training, testing, and validation of embodied AI models.

Provides secure boot and firmware over-the-air (FOTA) updates. Secure boot establishes a chain of trust, while FOTA enables zero-downtime atomic upgrades.
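To make the two ideas concrete, here is a minimal, illustrative sketch and not AutoCore's implementation. It assumes a simplified chain of trust in which each boot stage records the SHA-256 digest of the next stage (production secure boot typically uses cryptographic signatures anchored in a hardware root of trust), and an A/B slot layout for atomic upgrades in which the new image is written to the inactive slot and activated by an atomic file rename. All names (`verify_chain`, `apply_update`, `state.json`, `slot_a.img`) are hypothetical.

```python
import hashlib
import json
import os
import tempfile


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of data as a hex string."""
    return hashlib.sha256(data).hexdigest()


def verify_chain(root_digest: str, stages: list[tuple[bytes, str]]) -> bool:
    """Check a simplified chain of trust.

    Each stage is (image_bytes, digest_of_next_stage). The first image must
    match the trusted root_digest; every later image must match the digest
    recorded by the stage before it.
    """
    expected = root_digest
    for image, next_digest in stages:
        if sha256_hex(image) != expected:
            return False  # chain broken: refuse to boot this stage
        expected = next_digest
    return True


def atomic_switch(state_path: str, new_slot: str) -> None:
    """Point the device at new_slot using an atomic rename.

    The state is written to a temporary file in the same directory and then
    moved over the old file with os.replace, so a reader sees either the old
    state or the new one, never a partial write.
    """
    directory = os.path.dirname(os.path.abspath(state_path))
    fd, tmp_path = tempfile.mkstemp(dir=directory)
    with os.fdopen(fd, "w") as f:
        json.dump({"active_slot": new_slot}, f)
        f.flush()
        os.fsync(f.fileno())
    os.replace(tmp_path, state_path)


def apply_update(state_path: str, slots_dir: str,
                 image: bytes, expected_digest: str) -> str:
    """Install image into the inactive slot and return the active slot name.

    The running slot is never modified; if verification fails, the update is
    rejected and the current slot stays active.
    """
    with open(state_path) as f:
        active = json.load(f)["active_slot"]
    inactive = "b" if active == "a" else "a"

    if sha256_hex(image) != expected_digest:
        return active  # reject a corrupted or tampered image

    with open(os.path.join(slots_dir, f"slot_{inactive}.img"), "wb") as f:
        f.write(image)
    atomic_switch(state_path, inactive)
    return inactive
```

In this sketch the running system keeps operating from its current slot for the whole update, which is one common way to approximate a zero-downtime upgrade; a real device would also need rollback handling if the new slot fails to boot.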

Supports a dual-kernel Robot OS architecture that balances hard real-time control (microsecond-level response) with resource-intensive tasks (such as large model decision-making).

Provides an integrated large model inference runtime environment optimized for the heterogeneous edge computing platforms found in robots (CPU + GPU + NPU, etc.). It lets robots run and schedule complex large AI models efficiently on resource-constrained edge devices, enabling advanced perception and understanding, complex autonomous decision-making, and smooth human-machine interaction at the edge.

Dexterous arm (>20 DOF) with a visual servoing system for micron-level part handling (e.g., chip placement).

Voice recognition, environment perception, continuous dialog, and simple body gestures.

Fuel cap identification and precise manipulator control for complete fueling operations.